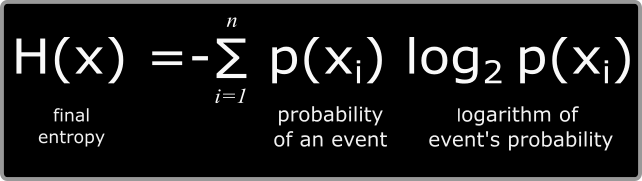

This argument suggests, by the way, that if you want to store information in a quantum-mechanical system you need to store it in the ground state, which the system will occupy at zero temperature; therefore you need to find a system which has multiple degenerate ground states. A ferromagnet has a degenerate ground state: the atoms in the magnet want to align with their neighbors, but the direction in which they choose to align is unconstrained. Once a ferromagnet has "chosen" an orientation, perhaps with the help of an external aligning field, that direction is stable as long as the temperature is substantially below the Curie temperature; that is, modest changes in temperature do not cause entropy-increasing fluctuations in the orientation of the magnet. You may be familiar with information-storage mechanisms operating on this principle.

Formally, the two entropies are the same thing: the thermodynamic entropy and the Shannon entropy of information theory are equal up to some numerical factors.

Shannon wrote the paper "A Mathematical Theory of Communication", which I strongly encourage you to read for its clarity and as an amazing source of information. In it he introduced the measure now known as the Shannon entropy, which is useful for discovering the statistical structure of a word or message. Consider a word as a discrete source drawing on a finite number of character types: for each possible character there is a set of probabilities of producing the various outputs. There is an entropy for each character, and the entropy of the chosen word is defined as the average of these, weighted by the characters' probability of occurrence.
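As a rough illustration of the entropy of a word, here is a minimal Python sketch. It assumes the simplest case, which the text does not spell out: each character's probability is estimated from its frequency within the word itself, and the standard formula $H = -\sum_i p_i \log_2 p_i$ is applied. The function name `shannon_entropy` is only illustrative.

```python
from collections import Counter
from math import log2


def shannon_entropy(word: str) -> float:
    """Entropy in bits per character, using in-word character frequencies.

    Assumption: probabilities are estimated from the word itself.
    """
    if not word:
        return 0.0
    counts = Counter(word)  # occurrences of each distinct character
    total = len(word)
    # Sum of -p * log2(p) over the distinct characters.
    return -sum((n / total) * log2(n / total) for n in counts.values())


if __name__ == "__main__":
    for w in ("aaaa", "abab", "abcd"):
        print(w, shannon_entropy(w))
```

A word made of a single repeated character has zero entropy, while a word whose characters are all different and equally frequent has the maximum entropy for its alphabet size.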
The canonical example is a jar of pennies. With 100 pennies, there are 100 ways that I can have one heads-up and the rest tails-up; there are $100\cdot99/2$ ways to have two heads; there are $100\cdot99\cdot98/6$ ways to have three heads; there are about […] $= 2500\rm\,K$.

So as you raise the temperature of your mole of argon from 300 K to 500 K, the number of excited atoms in your mole changes from exactly zero to exactly zero, which is a zero-entropy configuration, independent of the temperature, in a purely thermodynamic process. Likewise, even at tens of thousands of kelvin, the entropy stored in the nuclear excitations is zero, because the probability of finding a nucleus in the first excited state around 2 MeV is many orders of magnitude smaller than one over the number of atoms in your sample.

Likewise, the thermodynamic entropy of the information in my Complete Works of Shakespeare is, if not zero, very low: there are a small number of configurations of text which correspond to a Complete Works of Shakespeare rather than a Lord of the Rings or a Ulysses or a Don Quixote made of the same material with equivalent mass. As long as the temperature of my book stays substantially below 506 kelvin, the probability of any letter in the book spontaneously changing to look like another letter or like an illegible blob is effectively zero, and changes in temperature are reversible. The information entropy ("Shakespeare's Complete Works fill a few megabytes") tells me the minimum thermodynamic entropy which had to be removed from the system in order to organize it into a Shakespeare's Complete Works, and an associated energy cost with transferring that entropy elsewhere; those costs are tiny compared to the total energy and entropy exchanges involved in printing a book.
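The counts in the jar-of-pennies example are binomial coefficients, and they can be checked with a short Python sketch. The jar size of 100 is inferred from the "100 ways to have one heads-up" in the text, and the helper name `ways_heads` is hypothetical.

```python
from math import comb


def ways_heads(n_coins: int, n_heads: int) -> int:
    """Number of distinct arrangements with exactly n_heads coins heads-up."""
    return comb(n_coins, n_heads)


if __name__ == "__main__":
    # Assumed jar size, inferred from the example in the text.
    n = 100
    for heads in (1, 2, 3):
        print(f"{heads} heads: {ways_heads(n, heads)} ways")
```

For 100 coins this gives 100, 4950 and 161700 arrangements for one, two and three heads, matching $100$, $100\cdot99/2$ and $100\cdot99\cdot98/6$.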
If all the indistinguishable states are equally probable, the number of "microstates" associated with a system is $\Omega = \exp( S/k )$, where the constant $k\approx\rm25\,meV/300\,K$ is related to the amount of energy exchanged by thermodynamic systems at different temperatures.
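The relation $\Omega = \exp(S/k)$ and its inverse $S = k \ln \Omega$ can be tried out numerically. The sketch below is only an illustration: it uses the approximate value $k \approx 25\,\mathrm{meV}/300\,\mathrm{K}$ quoted above, expressed in eV/K, and the function names are hypothetical. The 100-coin, 50-heads count is used purely as a convenient equally-probable set of microstates.

```python
from math import comb, exp, log

# Approximate Boltzmann constant in eV/K, taken from k ≈ 25 meV / 300 K.
K_EV_PER_K = 0.025 / 300


def entropy_from_microstates(omega: float, k: float = K_EV_PER_K) -> float:
    """S = k * ln(Omega), assuming all microstates are equally probable."""
    return k * log(omega)


def microstates_from_entropy(s: float, k: float = K_EV_PER_K) -> float:
    """Omega = exp(S / k), the inverse of entropy_from_microstates."""
    return exp(s / k)


if __name__ == "__main__":
    omega = comb(100, 50)  # arrangements of 100 coins with 50 heads-up
    s = entropy_from_microstates(omega)
    print("S in eV/K:", s)
    print("Omega recovered:", microstates_from_entropy(s))
```

Because $\Omega$ grows exponentially with $S/k$, `exp` overflows for macroscopic entropies; for real thermodynamic systems it is more practical to work with $S/k = \ln\Omega$ directly.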

The entropy $S$ in thermodynamics is related to the number of indistinguishable states that a system can occupy. I suppose you could claim otherwise if you assume "entropy is knowledge," but I think that's exactly backwards: I think that knowledge is a special case of low entropy. So Pratchett's quote seems to be about energy, rather than entropy.
